Operationalizing Responsible Artificial Intelligence: From Ethical Principles to Technical Implementation

Article by Hira Lal Bhandari – PhD Scholar, Department of Computer Science, Deen Dayal Upadhyaya Gorakhpur University, Gorakhpur, Uttar Pradesh, India

Abstract

This article defines responsible artificial intelligence (RAI), discusses the ethical standards and principles that govern its operationalization, and considers how policy needs to be optimized to reach a higher standard at the implementation level.

1. Introduction

Google Research presents responsible artificial intelligence (RAI) as the development of artificial intelligence that incorporates attributes such as privacy, fairness and safety, as well as regard for human aspects. Beba Cibralic et al. define RAI as a broad concept comprising a set of principles, practices and measurements that ensure AI technologies and products are developed with regard to safety, ethics, and social norms and values. In their view, everyone from computer scientists to ethicists, legal professionals, UX researchers and others should become a responsible AI practitioner to meet this requirement.

The Local Government Association states that an AI model is trained on the dataset it is given, so we should be aware of the quality of the data in order to prevent the biases that may arise. Data privacy is equally important for complying with privacy law. Attributes such as fairness, equality and inclusion should be reflected in the training data. According to IBM Technology, ethical issues are not strictly technical challenges; rather, they are socio-technical challenges that should be addressed as a whole, which means thinking about people, processes and tools. We should also be aware of the organizational culture, which helps to assess AI responsibility and to assure a sound governance process for AI, its tools and AI engineering frameworks.
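The data-quality point above can be made concrete with a small check on the training data. The following is a minimal sketch, not a method taken from any of the cited sources: the function name `group_report`, the record fields `group` and `label`, and the toy records are all assumptions made for illustration. It reports how large a share of the dataset each group has and how often each group carries a positive label, two simple signals that may hint at representation or label bias.

```python
from collections import Counter


def group_report(records, group_key, label_key):
    """Return each group's share of the dataset and its positive-label rate.

    `records` is a list of dicts; `group_key` and `label_key` are
    hypothetical field names chosen for this illustration.
    """
    counts = Counter(r[group_key] for r in records)
    positives = Counter(r[group_key] for r in records if r[label_key] == 1)
    total = len(records)
    return {
        g: {
            "share": counts[g] / total,               # fraction of all records
            "positive_rate": positives[g] / counts[g],  # fraction labelled 1
        }
        for g in counts
    }


# Toy data, invented for the example.
records = [
    {"group": "A", "label": 1},
    {"group": "A", "label": 1},
    {"group": "A", "label": 0},
    {"group": "B", "label": 0},
]
report = group_report(records, "group", "label")
for group, stats in report.items():
    print(group, stats)
```

A large gap in share or positive rate does not prove the data is biased, but it is a prompt to look more closely before training.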

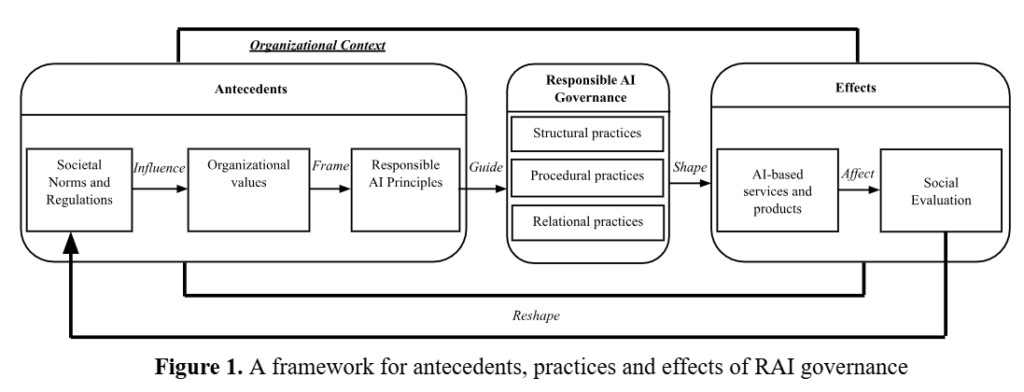

Emmanouil Papagiannidis et al. have proposed an enhanced framework that is able to assess RAI governance and portray how organizations are enabled to implement RAI principles in their AI applications. By proposing the architectural design from their synthesized study, they have included discussions on key domains for RAI governance and diagnose major antecedents and outcomes. In totality, their actionable research themes make us understand how RAI governance could be applied and acknowledge effects of RAI in the institutions and the society. They have depicted their framework as follows:

2. Responsible AI Principles

Daswin DeSilva et al. identify seven principles for RAI and its practice in their review study. These principles are: (a) privacy and data protection, (b) accountability, (c) transparency and explainability, (d) human agency and oversight, (e) fairness and algorithmic bias, (f) socially beneficial practice of AI and (g) robustness and reliability.

3. Operationalization Steps

Liming Zhu et al. discuss approaches and mechanisms for operationalizing AI ethics, rather than handling AI ethics only through high-level principles and low-level algorithms.

- Requirements: We should treat ethical principles as a major part of AI system requirements, rather than focusing only on traditional functional and non-functional requirements.

- Design: We should propose frameworks that address ethical principles, rather than relying on existing ones that are intended to protect end users and system users from the external world.

- Operations: There should be validation and monitoring mechanisms that check adherence to ethical principles repeatedly. Everyone involved should ensure that the conditions have clear bounds, sufficient to support a standardized check.

- Governance:

• There should be provision for maturity certification, granted after assessing an organization’s ethical maturity in managing AI projects. Different categories of maturity certificate should be awarded to an organization according to the outcome of its review, and the assessment should be performed rigorously.

• Ethical principles should be assessed through internal and external reviews to diagnose ethical outcomes. Representative stakeholders should take part in these reviews.

• We should build project teams whose members are diverse in culture, background and discipline.
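The Operations step above, a repeated check against a clearly bounded condition, can be sketched in code. This is an illustrative sketch under stated assumptions, not the mechanism proposed by Liming Zhu et al.: the choice of metric (the gap in positive-prediction rates between groups, often called demographic parity difference), the `FairnessBound` class, the `check` function and the bound of 0.2 are all hypothetical choices made for the example.

```python
from dataclasses import dataclass


@dataclass
class FairnessBound:
    """A named metric and the largest gap the team agrees to accept."""
    metric: str
    max_gap: float


def demographic_parity_gap(predictions, groups):
    """Largest difference in positive-prediction rate between any two groups."""
    totals, positives = {}, {}
    for pred, grp in zip(predictions, groups):
        totals[grp] = totals.get(grp, 0) + 1
        positives[grp] = positives.get(grp, 0) + (1 if pred == 1 else 0)
    rates = [positives[g] / totals[g] for g in totals]
    return max(rates) - min(rates)


def check(predictions, groups, bound):
    """Run one standardized check and report whether the bound holds."""
    gap = demographic_parity_gap(predictions, groups)
    return {"metric": bound.metric, "gap": gap, "passed": gap <= bound.max_gap}


# Hypothetical bound and toy predictions, invented for the example.
bound = FairnessBound(metric="demographic_parity_gap", max_gap=0.2)
result = check([1, 0, 1, 1, 0, 0], ["A", "A", "A", "B", "B", "B"], bound)
print(result)
```

In practice such a check would be run on a schedule against recent predictions, and a failed check would be escalated to the review process described under Governance. The metric and the bound are policy decisions rather than technical ones, so they would be set by the stakeholders, not by the code.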

4. Conclusion

This study discusses the operationalization of responsible and ethical artificial intelligence through its ethical standards, with a focus on the practical implications of RAI. The major aspects of this study are:

- The study emphasizes the development of AI technologies and products that incorporate attributes such as safety, ethicality, fairness, equality, and social norms and values.

- The article identifies that ethical issues are not strictly technical challenges but socio-technical challenges that should be considered.

- This article assesses the need to optimize the framework for better operationalization of RAI governance and its practical implementation in AI applications.

- This study identifies seven principles for RAI and its practice, listed in section 2. Similarly, operationalization steps for RAI are discussed in section 3.
